About the author

Gonzalo Gomez

AI & Automation Specialist

I design AI-powered communication systems. My work focuses on voice agents, WhatsApp chatbots, AI assistants, and workflow automation built primarily on Twilio, n8n, and modern LLMs like OpenAI and Claude. Over the past 7 years, I've shipped 30+ automation projects handling 250k+ monthly interactions.


Introduction

Most AI assistant tutorials focus on prompts or models.

In production, that is rarely the hard part.

The real challenge is building a system that:

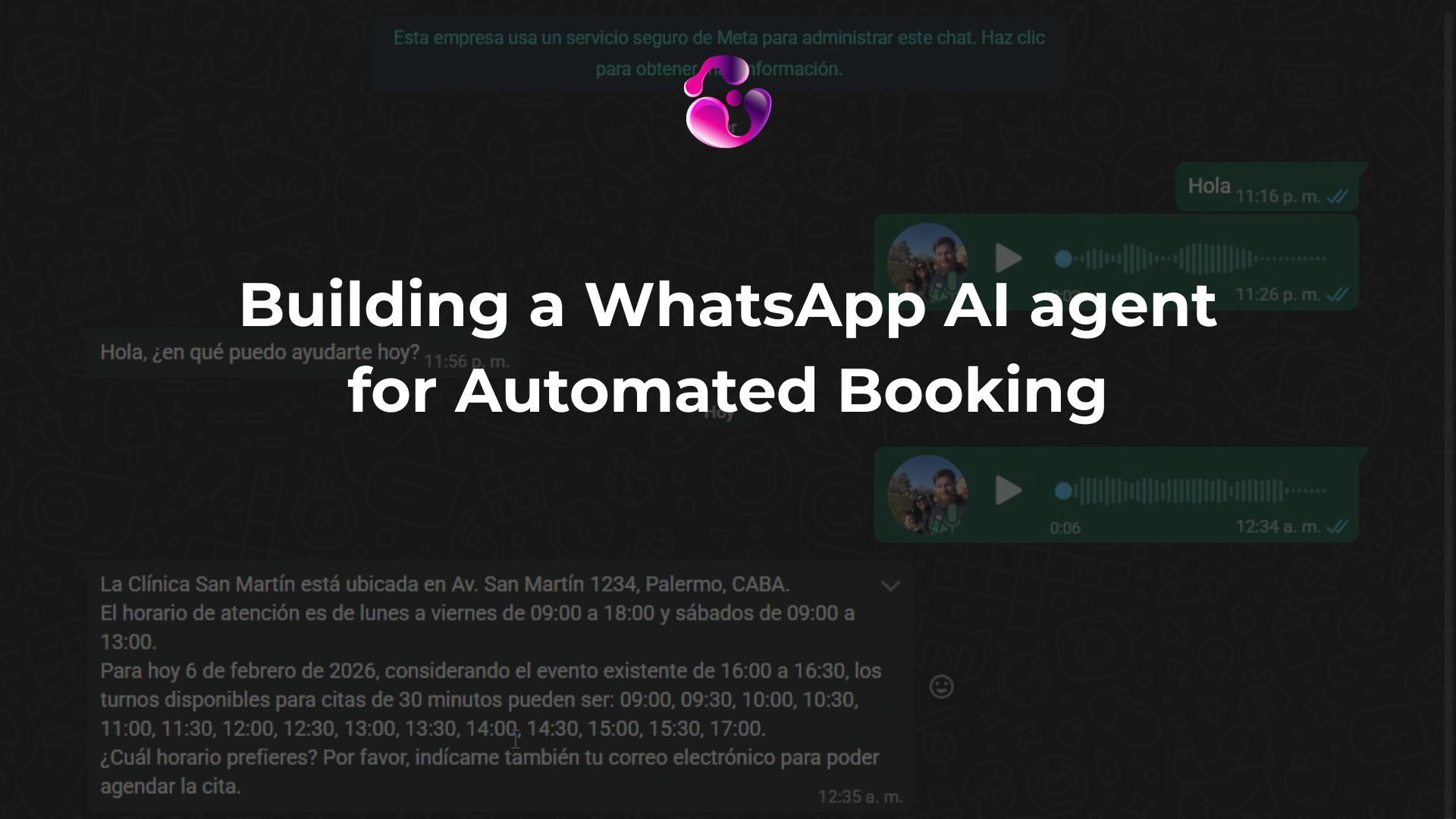

- accepts multiple input formats (text and voice)

- understands intent

- validates real-world constraints (calendar availability)

- executes actions safely

- maintains conversational context.

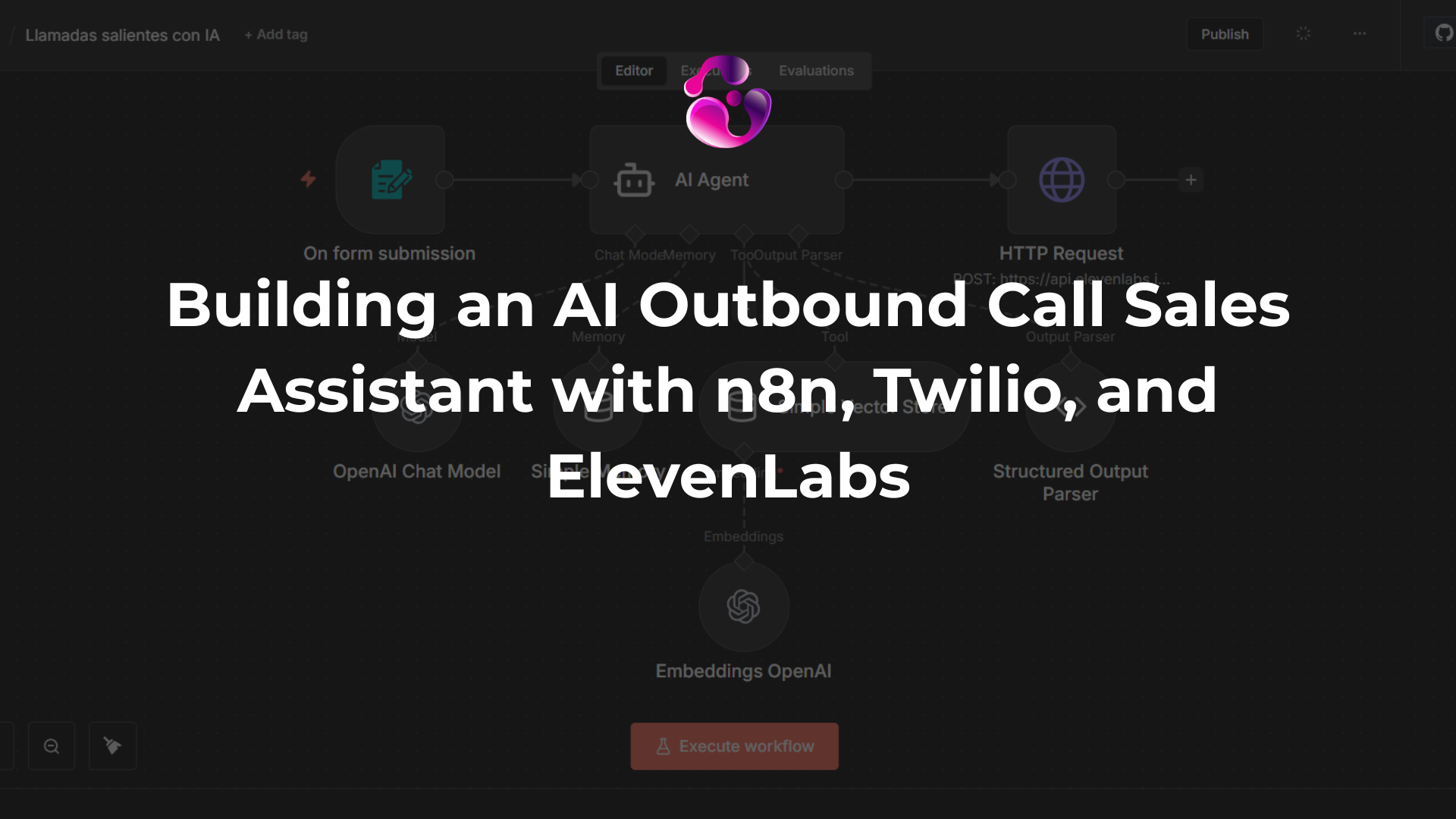

In this article, I’ll break down the full architecture behind a WhatsApp AI agent that automatically schedules appointments.

Instead of a step-by-step tutorial, this is a practical engineering walkthrough:

- system architecture

- workflow design decisions

- real node configurations

- selected JSON snippets from the production flow.

The Full Flow Overview

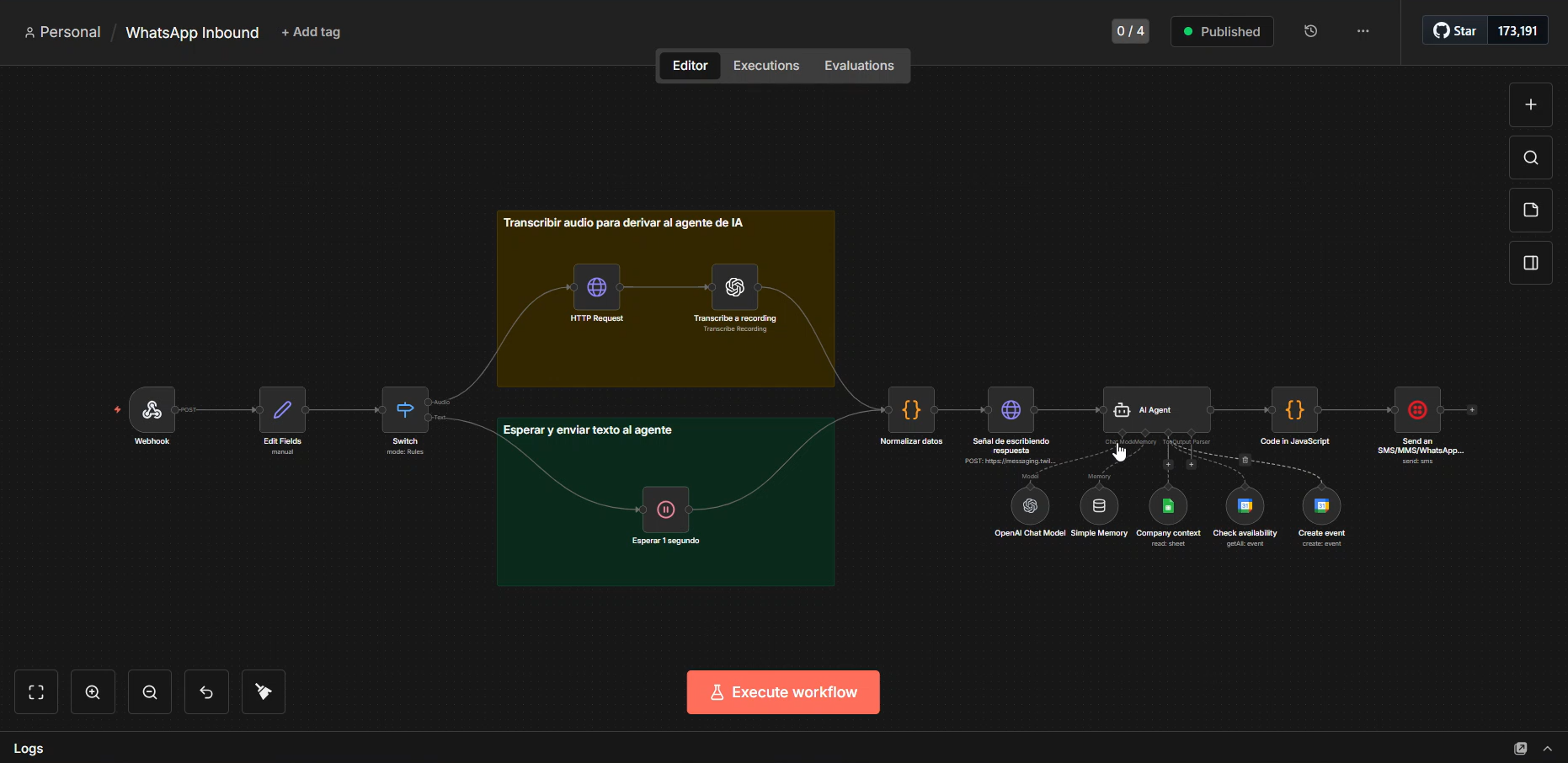

Before diving into details, here is the overall architecture:

WhatsApp (Twilio) → Webhook → Input normalization → Audio/Text branching → Transcription (if needed) → AI Agent (tools + memory) → Output parser → WhatsApp response

Key principle:

The LLM is only one component inside a larger system.

Why Architecture Matters More Than the Model

A conversational agent that schedules appointments must respect real constraints:

- calendar events represent occupied time

- business data must come from structured sources

- conversations require state

- voice and text must behave identically from the agent's perspective.

Without solving these first, the model becomes unreliable regardless of how good it is.

Step 1: Webhook Entry (Twilio → n8n)

All incoming messages arrive via webhook.

Example node configuration:

{
  "type": "n8n-nodes-base.webhook",
  "parameters": {
    "httpMethod": "POST",
    "path": "your-webhook-id"
  },
  "name": "Webhook"
}

Design decision:

Never let downstream nodes depend on raw webhook structure. Normalize early.

Step 2: Data Normalization

We convert the incoming payload into a stable internal format:

- type (audio or text)

- body

- from

- recording URL

{
  "type": "n8n-nodes-base.set",
  "name": "Edit Fields",
  "parameters": {
    "assignments": {
      "assignments": [
        { "name": "type", "value": "={{ $json.body.MessageType }}" },
        { "name": "body", "value": "={{ $json.body.Body }}" },
        { "name": "from", "value": "={{ $json.body.From }}" },
        { "name": "recording", "value": "={{ $json.body.MediaUrl0 }}" }
      ]
    }
  }
}

This matters because the rest of the system no longer depends on provider-specific formats.
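As a minimal sketch of the same mapping outside n8n, the function below turns a Twilio webhook body into the internal format. The function name `normalizeTwilioPayload`, the fallback values, and the sample phone number are assumptions for illustration; only the four field names come from the node above.

```javascript
// Sketch: map a Twilio WhatsApp webhook body to the internal message format.
// The Twilio parameter names (MessageType, Body, From, MediaUrl0) are the ones
// used in the "Edit Fields" node; the defaults are illustrative assumptions.
function normalizeTwilioPayload(body) {
  const payload = body ?? {};
  return {
    type: payload.MessageType ?? 'text', // assumption: treat a missing type as text
    body: payload.Body ?? '',
    from: payload.From ?? '',
    recording: payload.MediaUrl0 ?? null, // only set when the message carries media
  };
}

// Hypothetical incoming text message.
const example = normalizeTwilioPayload({
  MessageType: 'text',
  Body: 'Can I book for Friday?',
  From: 'whatsapp:+15550100',
});
console.log(example);
```

If the provider changes, only this mapping needs to change; everything downstream keeps reading `type`, `body`, `from`, and `recording`.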

Step 3: Branching Audio vs Text

Audio messages require extra steps:

- download recording

- speech-to-text

- merge back into unified pipeline.

Example switch logic:

{
  "type": "n8n-nodes-base.switch",
  "name": "Switch",
  "parameters": {
    "rules": {
      "values": [
        { "outputKey": "Audio", "conditions": [{ "leftValue": "={{ $json.type }}", "rightValue": "audio" }] },
        { "outputKey": "Text", "conditions": [{ "leftValue": "={{ $json.type }}", "rightValue": "text" }] }
      ]
    }
  }
}

Important principle:

The AI agent should never know whether input was audio or text.

Step 4: Audio Processing (Download + Transcribe)

Voice notes are fetched from Twilio servers and transcribed using OpenAI.

{
  "type": "n8n-nodes-base.httpRequest",
  "name": "HTTP Request",
  "parameters": {
    "url": "={{ $json.recording }}",
    "authentication": "predefinedCredentialType",
    "nodeCredentialType": "twilioApi"
  }
}

{
  "type": "@n8n/n8n-nodes-langchain.openAi",
  "name": "Transcribe a recording",
  "parameters": {
    "resource": "audio",
    "operation": "transcribe",
    "options": { "language": "es" }
  }
}

Key insight:

Normalize inputs before they reach the agent. Otherwise, prompts become unnecessarily complex.
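The download node in this step uses the Twilio credential, which n8n applies for you. If you reproduce the download outside n8n, a rough equivalent is an HTTP Basic header built from the Account SID and Auth Token. The helper name `buildMediaRequest` and the placeholder credentials are assumptions, and whether your media URLs require authentication depends on your Twilio account settings.

```javascript
// Sketch: build request options for downloading a Twilio-hosted recording.
// accountSid and authToken are placeholders; load real values from a secret store.
function buildMediaRequest(recordingUrl, accountSid, authToken) {
  // HTTP Basic auth: base64("username:password").
  const credentials = Buffer.from(`${accountSid}:${authToken}`).toString('base64');
  return {
    url: recordingUrl,
    method: 'GET',
    headers: { Authorization: `Basic ${credentials}` },
  };
}

// Hypothetical values for illustration only.
const mediaRequest = buildMediaRequest(
  'https://example.com/recording.ogg',
  'ACxxxxxxxx',
  'your-auth-token'
);
console.log(mediaRequest.method, mediaRequest.url);
```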

Step 5: Input Unification (Critical Node)

Both branches merge into a single standardized structure:

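// Start from the normalized fields produced by the "Edit Fields" node.
const base = { ...$('Edit Fields').first().json };

// Audio items arrive with the transcription in `text`; text items keep their body.
const normalizedBody = (base.type === 'audio')
  ? ($input.first().json.text ?? '').trim()
  : (base.body ?? '');

return [{
  json: {
    ...base,
    body: normalizedBody,
    // Strip the "whatsapp:" prefix; tolerate a missing value or a missing prefix.
    from: (base.from ?? '').replace(/^whatsapp:/, '')
  }
}];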

This dramatically reduces downstream complexity.
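For testing the merge logic outside n8n, here is a hedged plain-function version of the same idea. The name `unifyInput` and its two-argument shape are assumptions; in the flow, the equivalent values come from the `Edit Fields` node and the current input item.

```javascript
// Sketch: merge the audio and text branches into one message shape.
// `base` is the normalized message; `transcription` is the speech-to-text
// result (only present on the audio branch).
function unifyInput(base, transcription) {
  const isAudio = base.type === 'audio';
  const body = isAudio
    ? (transcription?.text ?? '').trim() // audio: use the transcribed text
    : (base.body ?? '');                 // text: use the original body
  // Remove the "whatsapp:" prefix if present; leave other values untouched.
  const from = (base.from ?? '').replace(/^whatsapp:/, '');
  return { ...base, body, from };
}

// Hypothetical voice note after transcription.
const unified = unifyInput(
  { type: 'audio', body: '', from: 'whatsapp:+15550100', recording: 'https://example.com/a.ogg' },
  { text: '  I would like an appointment on Friday  ' }
);
console.log(unified.body, unified.from);
```

After this point the agent receives the same `body` and `from` fields whether the user typed or spoke.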

Step 6: UX Detail Most Tutorials Skip

Before generating the response, we send a “typing” indicator via Twilio through an HTTP Request node.

{
  "type": "n8n-nodes-base.httpRequest",
  "name": "Señal de escribiendo respuesta",
  "parameters": {
    "method": "POST",
    "url": "https://messaging.twilio.com/v2/Indicators/Typing.json",
    "bodyParameters": {
      "parameters": [
        { "name": "messageId", "value": "={{ $('Webhook').item.json.body.MessageSid }}" },
        { "name": "channel", "value": "whatsapp" }
      ]
    }
  }
}

It is a small change with a large effect on how intelligent the assistant feels: users see that their message is being handled, so the exchange feels like a live conversation rather than a background automation.

Step 7: The AI Agent (where, in my opinion, most people oversimplify)

The agent is composed of:

Model

GPT-4.1-mini with a structured output schema. I currently use a mini model because it keeps cost low as volume scales and responds quickly, without spending extra reasoning time on information the agent already has.

Memory

Session keyed by phone number.

The window is limited to the most recent interactions to avoid uncontrolled context growth.
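In the flow, this is handled by the agent's memory configuration. To illustrate the idea on its own, here is a small sketch of a windowed session store keyed by phone number. The class name `SessionMemory`, the default window of 10 messages, and the sample number are assumptions.

```javascript
// Sketch: per-user conversation memory with a fixed-size sliding window.
class SessionMemory {
  constructor(windowSize = 10) {
    this.windowSize = windowSize; // max messages kept per session (assumed value)
    this.sessions = new Map();    // phone number -> array of { role, content }
  }

  append(phone, role, content) {
    const history = this.sessions.get(phone) ?? [];
    history.push({ role, content });
    // Keep only the most recent `windowSize` messages.
    this.sessions.set(phone, history.slice(-this.windowSize));
  }

  get(phone) {
    return this.sessions.get(phone) ?? [];
  }
}

const memory = new SessionMemory(2);
memory.append('+15550100', 'user', 'Hi');
memory.append('+15550100', 'assistant', 'Hello, how can I help?');
memory.append('+15550100', 'user', 'I need an appointment');
console.log(memory.get('+15550100').length);
```

Keying by phone number keeps each user's context separate, and the window bounds how much history is sent to the model on every turn.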

Tools

- Company context (Google Sheets)

- Calendar availability lookup

- Calendar event creation.

Key design rule:

The model does not invent data. It queries tools.
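One way to picture this rule is a small tool registry: the agent can only request tools by name, and anything not registered is rejected instead of improvised. This is a sketch under assumptions; the tool names, the stub return values, and `callTool` are hypothetical stand-ins for the Google Sheets and calendar tools in the real flow.

```javascript
// Sketch: a registry of tools the agent is allowed to call.
// The stubs return placeholder data; real tools would call external services.
const tools = {
  getCompanyContext: async () => ({ name: 'Example Clinic', hours: '09:00-17:00' }),
  getCalendarEvents: async ({ date }) => [],
  createCalendarEvent: async ({ start, end }) => ({ created: true, start, end }),
};

// Run a tool by name and always return a structured result the agent can read.
async function callTool(name, args = {}) {
  const tool = Object.hasOwn(tools, name) ? tools[name] : undefined;
  if (!tool) {
    return { ok: false, error: `Unknown tool: ${name}` };
  }
  try {
    return { ok: true, result: await tool(args) };
  } catch (err) {
    return { ok: false, error: String(err?.message ?? err) };
  }
}

callTool('getCompanyContext').then((res) => console.log(res.ok));
```

Returning errors as data, instead of throwing, lets the agent explain the failure to the user rather than crashing the workflow.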

Example Model Configuration

{
  "model": "gpt-4.1-mini",
  "response_format": "json_schema"
}

Structured output prevents downstream failures.
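The configuration above is abbreviated. As a hedged sketch, this is roughly what a full `response_format` object looks like when calling the OpenAI Chat Completions API directly, plus a shape check for the reply. The schema name `agent_reply` and the helper `isValidReply` are assumptions; the `message` field matches the one the final Twilio node reads. In n8n the schema may be configured through a different node option.

```javascript
// Sketch: a JSON Schema response format with a single required field.
const responseFormat = {
  type: 'json_schema',
  json_schema: {
    name: 'agent_reply', // hypothetical schema name
    strict: true,
    schema: {
      type: 'object',
      properties: {
        message: { type: 'string' }, // text sent back to the user
      },
      required: ['message'],
      additionalProperties: false,
    },
  },
};

// Minimal runtime check that a parsed reply has the expected shape.
function isValidReply(value) {
  return value !== null && typeof value === 'object' && typeof value.message === 'string';
}

console.log(isValidReply({ message: 'Your appointment is confirmed.' }));
```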

Step 8: Availability Validation Logic

One fundamental constraint:

Calendar events represent occupied time, not availability.

The system must:

- fetch events

- compute free slots

- propose only valid times.

This single rule prevents most real-world booking failures.
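The three steps above can be sketched as a single function that walks the busy intervals and emits the gaps. This is an illustrative version, not the production logic: the name `computeFreeSlots`, the fixed slot length, and the use of minutes since midnight (to avoid time-zone handling) are all assumptions.

```javascript
// Sketch: derive free slots from busy calendar events.
// All times are minutes since midnight, e.g. 540 = 09:00.
function computeFreeSlots(busy, dayStart, dayEnd, slotMinutes) {
  // Sort a copy by start time so the events can be walked in one pass.
  const sorted = [...busy].sort((a, b) => a.start - b.start);
  const free = [];
  let cursor = dayStart;

  for (const event of sorted) {
    // Emit every slot that fits completely before this event starts.
    const limit = Math.min(event.start, dayEnd);
    while (cursor + slotMinutes <= limit) {
      free.push({ start: cursor, end: cursor + slotMinutes });
      cursor += slotMinutes;
    }
    // Skip past the event; Math.max also covers overlapping events.
    cursor = Math.max(cursor, event.end);
  }

  // Emit the slots that fit between the last event and the end of the day.
  while (cursor + slotMinutes <= dayEnd) {
    free.push({ start: cursor, end: cursor + slotMinutes });
    cursor += slotMinutes;
  }
  return free;
}

// Two overlapping events, 10:00-11:00 and 10:30-12:00, in a 09:00-13:00 day.
const slots = computeFreeSlots(
  [{ start: 600, end: 660 }, { start: 630, end: 720 }],
  540, 780, 60
);
console.log(slots); // [ { start: 540, end: 600 }, { start: 720, end: 780 } ]
```

The agent then proposes only entries from this list, so it never offers a time that collides with an existing event.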

Step 9: Defensive Output Parsing

Agents don’t always return identical output structures.

Instead of trusting the response blindly, we parse defensively:

function tryParseJson(output) {
  // Return null instead of throwing when the agent's output is not valid JSON.
  try { return JSON.parse(output); } catch { return null; }
}

Production systems assume variability.
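A slightly fuller sketch of the same idea handles the variations that tend to appear in practice: output that is already an object, JSON wrapped in a Markdown code fence, and plain text. The function names `parseAgentOutput` and `toReplyMessage` and the fallback text are assumptions for illustration.

```javascript
const FENCE = '`'.repeat(3); // Markdown code fence marker

// Sketch: accept objects, raw JSON strings, or fenced JSON; otherwise return null.
function parseAgentOutput(output) {
  if (output !== null && typeof output === 'object') return output;
  if (typeof output !== 'string') return null;

  let text = output.trim();
  // Unwrap a code fence if the model wrapped its JSON in one.
  if (text.startsWith(FENCE) && text.endsWith(FENCE)) {
    text = text.slice(3, -3).replace(/^json\s*/i, '').trim();
  }
  try {
    const parsed = JSON.parse(text);
    // Only objects are useful downstream; reject bare numbers, strings, null.
    return parsed !== null && typeof parsed === 'object' ? parsed : null;
  } catch {
    return null;
  }
}

// Pick the message to send, falling back to a safe default (hypothetical text).
function toReplyMessage(output, fallback) {
  const parsed = parseAgentOutput(output);
  return typeof parsed?.message === 'string' && parsed.message.trim() !== ''
    ? parsed.message
    : fallback;
}

console.log(toReplyMessage('not json', 'Sorry, could you rephrase that?'));
```

With a fallback in place, a malformed model response produces a polite retry prompt instead of an empty or failed WhatsApp message.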

Step 10: Sending the Response via WhatsApp

The final node sends the AI-generated message back through Twilio. The step itself is simple, but it runs only after the validation and formatting layers.

{
  "type": "n8n-nodes-base.twilio",
  "name": "Send an SMS/MMS/WhatsApp message",
  "parameters": {
    "toWhatsapp": true,
    "to": "={{ $('Normalizar datos').item.json.from }}",
    "message": "={{ $json.message }}"
  }
}

Lessons Learned

- AI assistants are architecture problems more than AI problems.

- Tool design matters more than prompt engineering.

- Input normalization reduces complexity dramatically.

- Memory design defines reliability.

- UX details like typing indicators increase trust.

Conclusion

AI agents are not chatbots with better prompts.

They are systems composed of:

- structured inputs

- tool-driven reasoning

- constrained execution.

If you are building something similar and want help moving from prototype to production, feel free to reach out.
