About the author

Gonzalo Gomez

AI & Automation Specialist

I design AI-powered communication systems. My work focuses on voice agents, WhatsApp chatbots, AI assistants, and workflow automation built primarily on Twilio, n8n, and modern LLMs like OpenAI and Claude. Over the past 7 years, I've shipped 30+ automation projects handling 250k+ monthly interactions.

Introduction

90% of the WhatsApp bots I audit have the same failure mode. The AI agent tells the user their conversation is being transferred to a human. Then nothing happens. The user keeps typing. The AI keeps responding. At some point the user realizes the "transfer" was a lie, gets frustrated, and leaves. You just lost a potential client because of a missing `if` statement.

This is not a prompt engineering problem. It is an architecture problem. The AI was never wired to actually hand off anything. It just said it would.

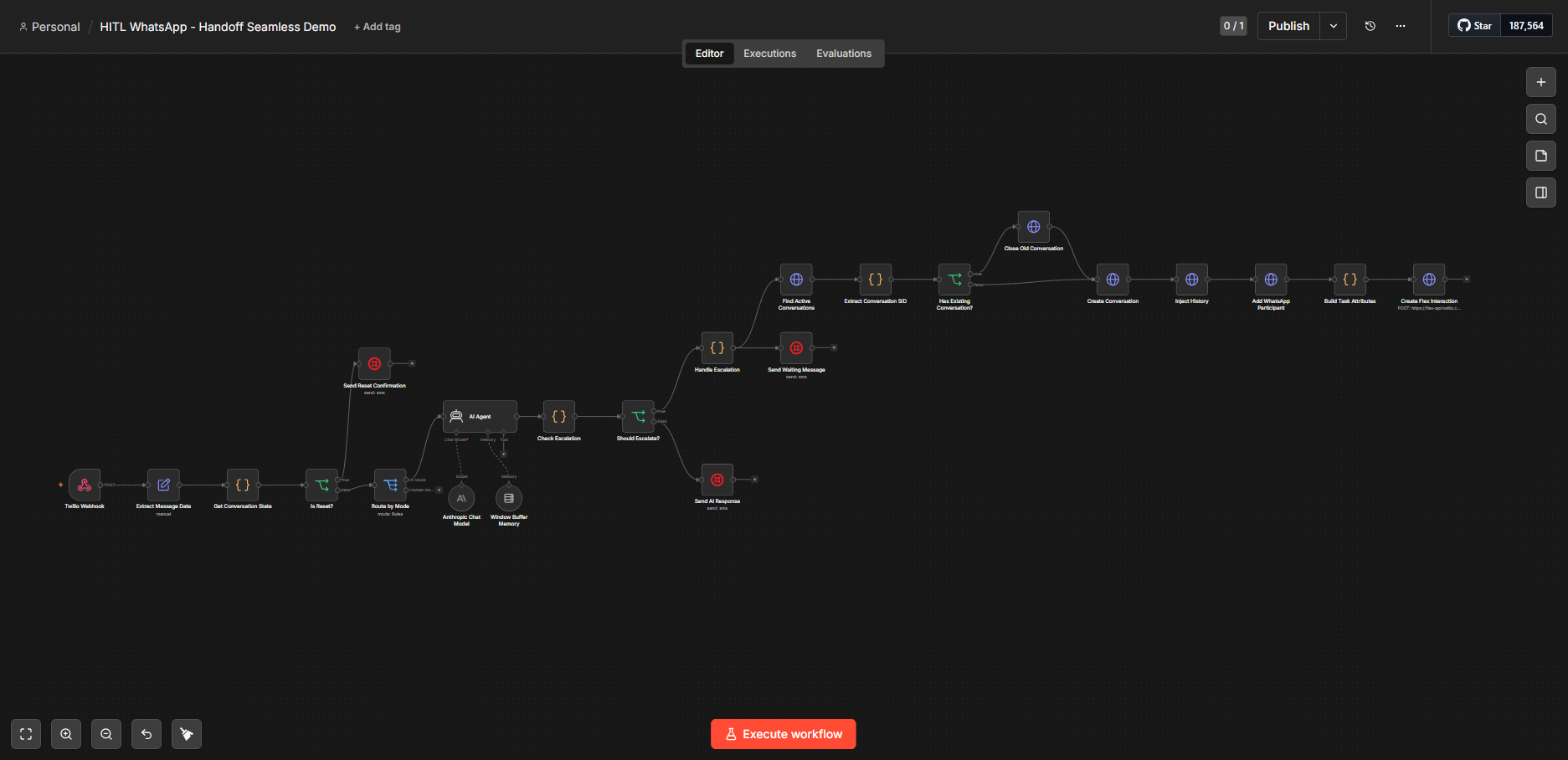

I built a system that fixes this using N8N as the orchestration layer, Claude Sonnet as the AI model, and Twilio Flex as the human agent platform. The goal: AI handles 80% of conversations autonomously, and the remaining 20% (sensitive complaints, out-of-scope questions, explicit requests for a human) get escalated with full conversation history intact.

Here is how the architecture works and what I would change about it.

How the flow is structured

When a message comes in, Twilio captures it and dispatches it to a webhook pointing at an N8N flow. The first thing the flow does is extract the message payload (from, to, body, context) and retrieve the current conversation state.
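A minimal sketch of the extraction step, assuming the webhook body carries Twilio's standard inbound-message fields (`From`, `To`, `Body`, `MessageSid`) and that WhatsApp addresses arrive with a `whatsapp:` prefix. The `extractMessage` helper and its output field names are hypothetical, not the exact node from this flow.

```javascript
// Hypothetical helper: normalizes a Twilio inbound-message webhook body.
function extractMessage(webhookBody) {
  const { From = "", To = "", Body = "", MessageSid = null } = webhookBody;
  return {
    // Strip the channel prefix so the phone number can be used as a state key.
    from: From.replace(/^whatsapp:/, ""),
    to: To.replace(/^whatsapp:/, ""),
    body: Body.trim(),
    messageSid: MessageSid,
    channel: From.startsWith("whatsapp:") ? "whatsapp" : "sms",
  };
}

// Example payload with placeholder values.
const incoming = extractMessage({
  From: "whatsapp:+5491100000000",
  To: "whatsapp:+14155238886",
  Body: "  Where is the clinic located?  ",
  MessageSid: "SM00000000000000000000000000000000",
});
```

The normalized `from` value is what the rest of the flow would use to look up conversation state.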

Conversation state is the key data structure here. It tells you whether this phone number has previous messages, what those messages were, and critically, what mode the conversation is in. The two modes are `ai` and `human`. By default, every new conversation starts in `ai` mode.
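As a rough illustration, the state for one phone number could look like the object below. This is a sketch under assumptions: the `Map`, the helper names, and the `role`/`text`/`at` fields are hypothetical stand-ins for whatever store and schema the flow actually uses.

```javascript
// In-memory stand-in for the conversation store, keyed by phone number (assumption).
const conversations = new Map();

// Returns the existing state, or creates a fresh one that starts in "ai" mode.
function getConversationState(phone) {
  if (!conversations.has(phone)) {
    conversations.set(phone, { mode: "ai", messages: [] });
  }
  return conversations.get(phone);
}

// Appends a message to the history and returns the updated state.
function recordMessage(phone, role, text) {
  const state = getConversationState(phone);
  state.messages.push({ role, text, at: new Date().toISOString() });
  return state;
}

const state = recordMessage("+5491100000000", "client", "Where is the clinic located?");
```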

Once you have the state, you route by mode:

- ai mode: the message goes to the Claude Sonnet node, which builds a response based on the system prompt and conversation history.

- human mode: the AI is bypassed entirely. The message gets routed to Twilio Flex for a human agent to handle.

The mode switch is what makes the whole thing work. Without it, the AI keeps responding even after escalation, and you end up sending two consecutive replies to the client: one from the bot, one from the human agent. That is a bad experience and also a signal that your system has no internal state.
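The routing decision itself is small. A sketch, assuming the state object exposes a `mode` field; the `routeByMode` name and the `"claude"` / `"flex"` branch labels are hypothetical:

```javascript
// Decides which branch of the flow handles the incoming message.
function routeByMode(state) {
  switch (state.mode) {
    case "ai":
      return "claude"; // AI builds the reply
    case "human":
      return "flex"; // AI is bypassed; message goes to the human agent
    default:
      // Fail loudly on corrupted state instead of silently letting the AI answer.
      throw new Error(`Unknown conversation mode: ${state.mode}`);
  }
}

const aiBranch = routeByMode({ mode: "ai", messages: [] });
const humanBranch = routeByMode({ mode: "human", messages: [] });
```

Throwing on an unknown mode is a design choice in this sketch: a conversation with broken state should surface as an error rather than default to either branch.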

Escalation detection

The AI node has a specific instruction at the end of the system prompt: if the conversation requires escalation, append the tag `[ESCALAR]` to the response.

This is intentionally deterministic. I am not asking the model to call a function or make a routing decision through reasoning. I am asking it to append a string, and then I am checking for that string downstream with a simple boolean evaluation.

IF response.includes("[ESCALAR]") → shouldEscalate = true

If `shouldEscalate` is false, the tag never appeared, so there is nothing to strip: the flow builds the outbound WhatsApp message and sends it. The conversation stays in `ai` mode.
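The tag check can be sketched as a single pure function. The `parseModelReply` name and the returned shape are hypothetical; the only thing taken from the flow is the `[ESCALAR]` tag and the `includes` check.

```javascript
const ESCALATION_TAG = "[ESCALAR]";

// Detects the escalation tag and returns the reply text with the tag removed,
// so the tag itself never reaches the client.
function parseModelReply(reply) {
  const shouldEscalate = reply.includes(ESCALATION_TAG);
  const text = reply.split(ESCALATION_TAG).join("").trim();
  return { shouldEscalate, text };
}

const normal = parseModelReply("We are open Monday to Friday, 9 to 18.");
const escalated = parseModelReply("Let me connect you with our team. [ESCALAR]");
```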

If `shouldEscalate` is true, the flow does the following:

1. Extracts the last 10 messages as context (the window size is a parameter: 10, 15, 20, or the full conversation)

2. Sends the client a holding message ("Your conversation is being transferred to our team")

3. Starts the Twilio Flex pipeline
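The escalation steps above can be sketched as one function that prepares everything the Flex pipeline needs. The `buildEscalation` name, the `contextSize` option, and the returned shape are hypothetical; passing `Infinity` as the window keeps the full conversation.

```javascript
const HOLDING_MESSAGE = "Your conversation is being transferred to our team";

// Prepares the escalation: context window, holding message, and the flipped mode.
function buildEscalation(state, { contextSize = 10 } = {}) {
  return {
    // slice(-n) keeps the last n messages; slice(-Infinity) keeps all of them.
    context: state.messages.slice(-contextSize),
    holdingMessage: HOLDING_MESSAGE,
    // Flip the mode at the moment of escalation so the AI stops replying.
    nextState: { ...state, mode: "human" },
  };
}

// Example with 12 placeholder messages.
const history = Array.from({ length: 12 }, (_, i) => ({ role: "client", text: `message ${i}` }));
const escalation = buildEscalation({ mode: "ai", messages: history });
```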

The Twilio Flex pipeline

Twilio Flex is a contact center platform that handles multi-channel conversations: voice, SMS, WhatsApp, Instagram, Facebook. In this architecture it is the surface where human agents receive and respond to escalated conversations.

The Flex pipeline does three things in sequence.

First, it checks for existing active conversations between this phone number and any agent. If one exists, it closes it. This is a Twilio Flex constraint: conversations must be in a `new` state for a human agent to accept them. You cannot reopen an active conversation and assign it; you have to recreate it.

Second, it creates a new conversation and injects the message history. The history is not just a dump of raw messages. It is structured so the receiving agent can read it as a coherent thread, not a JSON blob.

Third, it adds participants (the client as originator, the agent as recipient), builds a task with routing attributes (workspace SID, workflow SID, channel set to WhatsApp, initiated by customer), and creates what Twilio calls an Interaction. This is what surfaces in the Flex console as an incoming task that an agent can accept.
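To make the routing attributes concrete, here is a sketch of the request body for the Interaction. Treat every field name as an assumption: they follow my reading of the Flex Interactions API shape (`channel` plus `routing.properties`), the `task_channel_unique_name` value and the contents of `attributes` are illustrative, and the SIDs are placeholders. Check the official reference before relying on any of it.

```javascript
// Hypothetical builder for the Interaction request body.
// Field names are assumptions; verify them against Twilio's documentation.
function buildInteractionPayload({ conversationSid, workspaceSid, workflowSid, customerAddress }) {
  return {
    channel: {
      type: "whatsapp",
      initiated_by: "customer",
      // Links the Interaction to the conversation that holds the injected history.
      properties: { media_channel_sid: conversationSid },
    },
    routing: {
      properties: {
        workspace_sid: workspaceSid,
        workflow_sid: workflowSid,
        task_channel_unique_name: "chat", // assumption
        // Free-form task attributes the workflow can route on (illustrative).
        attributes: { channelType: "whatsapp", customerAddress, conversationSid },
      },
    },
  };
}

const payload = buildInteractionPayload({
  conversationSid: "CHXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX",
  workspaceSid: "WSXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX",
  workflowSid: "WWXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX",
  customerAddress: "whatsapp:+5491100000000",
});
```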

Once the agent accepts the task in the Flex UI, they see the full conversation history. They reply directly from the console. Those replies go back to the client over WhatsApp. The AI is no longer involved because the mode was flipped to `human` at the moment of escalation.
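What the agent sees depends on how the history was formatted before injection. A minimal sketch of that formatting, assuming each stored message has a `role` of `"client"` or `"ai"` and a `text` field; both the field names and the `formatHistory` helper are hypothetical.

```javascript
// Turns stored messages into a readable thread, one line per message.
function formatHistory(messages) {
  return messages
    .map(({ role, text }) => `${role === "client" ? "Client" : "AI"}: ${text}`)
    .join("\n");
}

const thread = formatHistory([
  { role: "client", text: "Where is the clinic located?" },
  { role: "ai", text: "Here is our address and the services we offer." },
  { role: "client", text: "I want to speak with a human." },
]);
```

Each line is labeled with its speaker, so the agent can skim the thread without decoding raw payloads.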

Here is how the conversation looked in a real test session:

- Client asks where the clinic is located → AI responds with address and services

- Client asks for specialties and opening hours → AI responds with full schedule

- Client says they want to speak with a human → AI sends transfer message, mode flips, task appears in Flex

- Human agent accepts: ""Hi, good afternoon, I'm Gonzalo, how can I help you?""

- Client requests a dermatology appointment for Tuesday

- Agent responds, closes the conversation

From that point on, the AI does not touch the thread. The client gets a reply from a person with full context of everything that was said before.

Two things I would change

This flow was built as a proof of concept for a specific client use case. They wanted the conversation to live entirely in N8N and did not need long-term history storage, since confirmed clients were handled in a completely separate flow.

First: the conversation state storage. Right now I am using `$getWorkflowStaticData()`, which means N8N itself is the source of truth for conversation history. That works until you restart the instance, at which point all state is gone. The node should be swapped for a proper external store: Redis, a Postgres table, or even a Google Sheet if the volume is low enough. Anything external to the N8N runtime.
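One way to make that swap painless is to hide the storage behind a tiny async `get`/`set` contract. The sketch below is an assumption about how this could be structured, not the current implementation: `JsonMemoryStore`, `loadState`, `saveState`, and the `conv:` key prefix are all hypothetical. The in-memory class serializes to JSON on purpose, so it behaves like an external store would.

```javascript
// Hypothetical store contract: any backend exposing async get(key) / set(key, value).
// This in-memory version stores JSON strings to mimic an external key-value store.
class JsonMemoryStore {
  constructor() {
    this.data = new Map();
  }
  async get(key) {
    const raw = this.data.get(key);
    return raw === undefined ? null : JSON.parse(raw);
  }
  async set(key, value) {
    this.data.set(key, JSON.stringify(value));
  }
}

// Loads the state for a phone number, defaulting to a fresh "ai" conversation.
async function loadState(store, phone) {
  return (await store.get(`conv:${phone}`)) ?? { mode: "ai", messages: [] };
}

// Persists the state after every processed message.
async function saveState(store, phone, state) {
  await store.set(`conv:${phone}`, state);
}
```

With this shape, moving to Redis or Postgres means writing another class with the same two methods; the flow logic that calls `loadState` and `saveState` does not change.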

Second: the mode reset mechanism. Currently the only way to move a conversation from `human` mode back to `ai` mode is to send the message `reset`, which triggers a debug branch in the flow. That branch exists purely for development convenience. For this client, the absence of a real reset was intentional: once escalated, the conversation stays human forever. But in a production system that serves multiple use cases, you would want a proper mechanism, maybe a timeout, an agent-triggered command, or a close-and-reopen pattern that resets the mode cleanly.
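The timeout option could look like the sketch below. It is not part of the current flow; the 24-hour window, the `lastHumanActivityAt` timestamp field, and the `resolveMode` helper are assumptions for illustration.

```javascript
const HUMAN_MODE_TIMEOUT_MS = 24 * 60 * 60 * 1000; // assumption: 24 hours

// Returns the state to use for the incoming message, resetting to "ai" mode
// when a human-mode conversation has been idle longer than the timeout.
function resolveMode(state, now = Date.now()) {
  const idleFor = now - state.lastHumanActivityAt;
  if (state.mode === "human" && idleFor > HUMAN_MODE_TIMEOUT_MS) {
    return { ...state, mode: "ai" };
  }
  return state;
}

const now = Date.parse("2024-01-02T12:00:00Z");
const stale = resolveMode({ mode: "human", lastHumanActivityAt: now - 25 * 60 * 60 * 1000 }, now);
const recent = resolveMode({ mode: "human", lastHumanActivityAt: now - 60 * 1000 }, now);
```

Running this check right after loading the state, before routing by mode, would keep the reset logic in one place.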

On the pattern itself

What I just described is called human-in-the-loop. It is one of the fundamental patterns in agentic system design, and it is also one of the things that looks simple until you actually implement it.

The hard part is not detecting when to escalate. The hard part is that when you hand off to a human, the context has to travel with the conversation. If it does not, the human agent is starting from scratch, the client has to repeat themselves, and you have introduced a friction point that is worse than just not having the AI at all.

The `[ESCALAR]` tag approach works well at this scale. For higher volume systems I would consider a more structured output from the model, something like a JSON field for `escalate: true/false`, rather than string parsing. It is more robust to prompt drift.
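A sketch of what that structured variant could look like, assuming the model is instructed to return a JSON object with a `reply` string and an `escalate` boolean. The `reply` field name and the `parseStructuredReply` helper are hypothetical, and escalating on malformed output is a choice made for this sketch, not something the current flow does.

```javascript
// Parses a structured model reply of the form {"reply": "...", "escalate": true|false}.
function parseStructuredReply(raw) {
  try {
    const parsed = JSON.parse(raw);
    if (typeof parsed.reply === "string" && typeof parsed.escalate === "boolean") {
      return { text: parsed.reply, shouldEscalate: parsed.escalate };
    }
  } catch (err) {
    // Invalid JSON (or a non-object value): fall through to the safe default.
  }
  // Assumption: when the output cannot be trusted, hand off to a human
  // rather than send a broken reply to the client.
  return { text: "", shouldEscalate: true };
}

const ok = parseStructuredReply('{"reply": "We open at 9.", "escalate": false}');
const handoff = parseStructuredReply('{"reply": "Connecting you now.", "escalate": true}');
const broken = parseStructuredReply("Sure! Here is the answer...");
```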

The mode system is what keeps the AI from double-firing after handoff. Without explicit state tracking, you are relying on the model to remember it already escalated. It will not. Models do not have that kind of persistence. The mode field does.

-Gonza
